About the Project

COG-MHEAR

An EPSRC-funded Programme Grant that brings together a multidisciplinary team of experts from seven UK universities to revolutionise hearing aid technology through multi-modal, cognitively inspired approaches.

Our world-class consortium includes Edinburgh Napier University, University of Edinburgh, University of Glasgow, University of Wolverhampton, Heriot-Watt University, University of Manchester, and University of Nottingham.

The Challenge

Only 40% of people who could benefit from hearing aids have them. Social stigma and the limited speech enhancement offered by current devices remain key barriers to adoption.

Our Solution

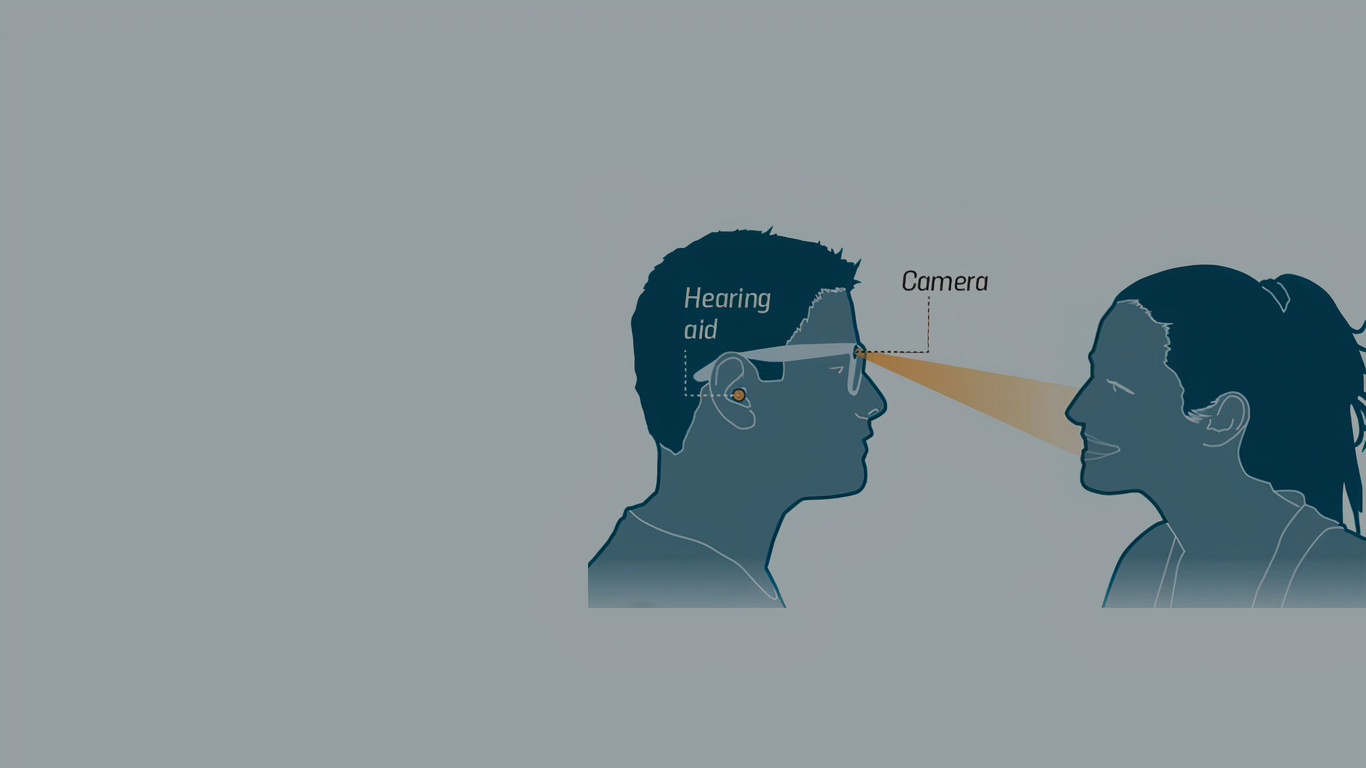

Multi-modal hearing aids that draw on the cognitive principles of normal hearing, combining audio and visual information to transform hearing care.

Innovation

Privacy-preserving encrypted video processing, radio-signal-based lip reading, and dedicated low-power hardware implementations.

Collaboration

Our Partners